MIT researchers developed a method that helps a user understand a machine-learning model’s reasoning, and how that reasoning compares to that of a human. Credit: Christine Daniloff, MIT

A new technique compares the reasoning of a machine-learning model to that of a human, so the user can see patterns in the model’s behavior.

In machine learning, understanding why a model makes certain decisions is often just as important as whether those decisions are correct. For instance, a machine-learning model might correctly predict that a skin lesion is cancerous, but it could have done so using an unrelated blip on a clinical photo.

While tools exist to help experts make sense of a model’s reasoning, these methods often provide insights on only one decision at a time, and each must be evaluated manually. Models are commonly trained on millions of data inputs, making it nearly impossible for a human to evaluate enough decisions to identify patterns.

Now, researchers at

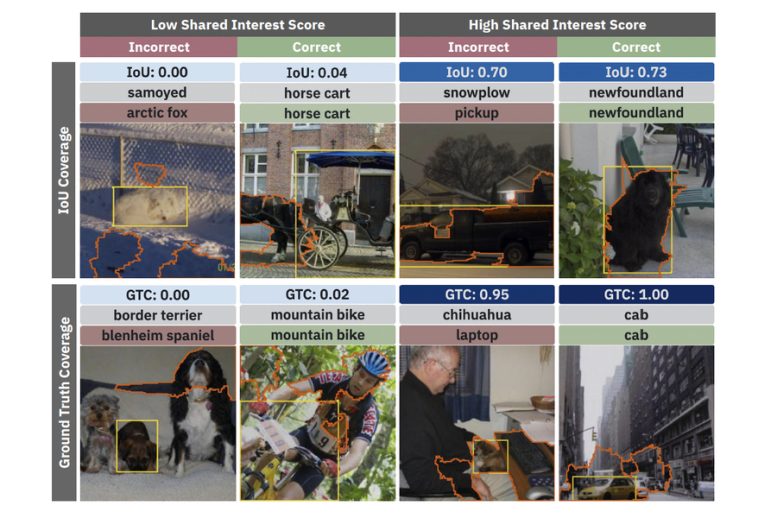

Researchers developed a method that uses quantifiable metrics to compare how well a machine-learning model’s reasoning matches that of a human. This image shows the pixels in each picture that the model used to classify it (outlined in orange) and how they compare to the most important pixels as defined by a human (outlined by the yellow box). Credit: Courtesy of the researchers
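The comparison in this figure can be made concrete with simple overlap ratios. The sketch below is a minimal illustration, not the researchers’ implementation: it assumes the model’s saliency map is thresholded into a set of “salient” pixels and compared with a human-drawn box using intersection over union plus two coverage ratios. The function names, the 0.5 threshold, and the toy 4x4 map are all hypothetical.

```python
from typing import Dict, List, Set, Tuple

Pixel = Tuple[int, int]  # (row, column)


def salient_pixels(saliency_map: List[List[float]], threshold: float) -> Set[Pixel]:
    """Keep pixels whose saliency score meets a (hypothetical) threshold."""
    return {
        (r, c)
        for r, row in enumerate(saliency_map)
        for c, value in enumerate(row)
        if value >= threshold
    }


def box_to_pixels(top: int, left: int, bottom: int, right: int) -> Set[Pixel]:
    """Pixels inside a human-drawn box; bottom and right are exclusive."""
    return {(r, c) for r in range(top, bottom) for c in range(left, right)}


def overlap_scores(saliency: Set[Pixel], ground_truth: Set[Pixel]) -> Dict[str, float]:
    """Set-overlap ratios between model-salient pixels and human-annotated pixels."""
    inter = len(saliency & ground_truth)
    union = len(saliency | ground_truth)
    return {
        # How closely the two regions match overall.
        "iou": inter / union if union else 0.0,
        # Share of the human region that the model also found salient.
        "ground_truth_coverage": inter / len(ground_truth) if ground_truth else 0.0,
        # Share of the model's salient pixels that fall inside the human region.
        "saliency_coverage": inter / len(saliency) if saliency else 0.0,
    }


# Toy 4x4 saliency map: the model focuses on the top two rows.
saliency_map = [
    [0.9, 0.8, 0.7, 0.6],
    [0.9, 0.8, 0.7, 0.6],
    [0.1, 0.1, 0.2, 0.2],
    [0.1, 0.1, 0.2, 0.2],
]
model_pixels = salient_pixels(saliency_map, threshold=0.5)
human_box = box_to_pixels(top=0, left=2, bottom=4, right=4)
scores = overlap_scores(model_pixels, human_box)
print(scores)
```

Using three ratios rather than one lets a reviewer tell apart a model that looks at only part of the human-marked region from one that spills well outside it, even when the overall overlap is the same.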

Rapid analysis

The researchers used three case studies to show how Shared Interest could be useful to both nonexperts and machine-learning researchers.

In the first case study, they used Shared Interest to help a dermatologist determine whether he should trust a machine-learning model designed to help diagnose cancer from photos of skin lesions. Shared Interest enabled the dermatologist to quickly see examples of the model’s correct and incorrect predictions. Ultimately, the dermatologist decided he could not trust the model because it made too many predictions based on image artifacts rather than actual lesions.

“The value here is that using Shared Interest, we are able to see these patterns emerge in our model’s behavior. In about half an hour, the dermatologist was able to make a confident decision of whether or not to trust the model and whether or not to deploy it,” says Angie Boggust, the paper’s lead author.

In the second case study, they worked with a machine-learning researcher to show how Shared Interest can evaluate a particular saliency method by revealing previously unknown pitfalls in the model. Their technique enabled the researcher to analyze thousands of correct and incorrect decisions in a fraction of the time required by typical manual methods.
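Scaling up from single explanations to thousands of decisions, as in these case studies, amounts to sorting and grouping per-example scores. The sketch below is a hypothetical illustration of that idea, not the tool’s code: it assumes each decision already has a precomputed score for the share of its salient pixels that fall inside the human-marked region, and it groups decisions by correctness and by whether that score clears an arbitrary 0.5 cutoff. The `Decision` class, bucket names, and image IDs are made up for the example.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Decision:
    image_id: str
    correct: bool             # did the prediction match the label?
    saliency_coverage: float  # precomputed: share of salient pixels inside the human region


def bucket_decisions(decisions: List[Decision], threshold: float = 0.5) -> Dict[str, List[str]]:
    """Group decisions by correctness and by (hypothetical) human alignment.

    Within each bucket the least human-aligned decisions come first, so a reviewer
    can start with the cases most likely to reveal shortcuts such as image artifacts.
    """
    buckets: Dict[str, List[str]] = {
        "correct_aligned": [],
        "correct_misaligned": [],
        "incorrect_aligned": [],
        "incorrect_misaligned": [],
    }
    for item in sorted(decisions, key=lambda item: item.saliency_coverage):
        outcome = "correct" if item.correct else "incorrect"
        alignment = "aligned" if item.saliency_coverage >= threshold else "misaligned"
        buckets[f"{outcome}_{alignment}"].append(item.image_id)
    return buckets


decisions = [
    Decision("img_a", correct=True, saliency_coverage=0.9),
    Decision("img_b", correct=True, saliency_coverage=0.1),
    Decision("img_c", correct=False, saliency_coverage=0.2),
    Decision("img_d", correct=True, saliency_coverage=0.3),
]
groups = bucket_decisions(decisions)
for name, ids in groups.items():
    print(name, len(ids), ids)
```

The “correct but misaligned” bucket is the interesting one: those are predictions that were right while the model looked mostly outside the region a human considered important.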

In the third case study, they used Shared Interest to dive deeper into a specific image classification example. By manipulating the ground-truth area of the image, they were able to conduct a what-if analysis to see which image features were most important for particular predictions.
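A what-if analysis of this kind can be sketched by holding the model’s salient pixels fixed and redrawing the ground-truth region several ways, then checking which version matches best. This is a minimal sketch under stated assumptions, not the researchers’ procedure: the region names, box coordinates, and the choice of intersection over union as the score are hypothetical.

```python
from typing import Dict, List, Set, Tuple

Pixel = Tuple[int, int]  # (row, column)


def box_to_pixels(top: int, left: int, bottom: int, right: int) -> Set[Pixel]:
    """Pixels inside a box; bottom and right are exclusive."""
    return {(r, c) for r in range(top, bottom) for c in range(left, right)}


def what_if(saliency: Set[Pixel], candidate_regions: Dict[str, Set[Pixel]]) -> List[Tuple[str, float]]:
    """Score each alternative ground-truth region by intersection over union
    with the same salient pixels, best match first."""
    scores = {}
    for name, region in candidate_regions.items():
        union = saliency | region
        scores[name] = len(saliency & region) / len(union) if union else 0.0
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


# The model's salient pixels stay fixed; only the "ground truth" is redrawn.
model_pixels = box_to_pixels(0, 0, 2, 2)
regions = {
    "whole_object": box_to_pixels(0, 0, 4, 4),
    "object_part": box_to_pixels(0, 0, 2, 2),
    "background": box_to_pixels(4, 4, 6, 6),
}
ranking = what_if(model_pixels, regions)
for name, iou in ranking:
    print(f"{name}: {iou:.2f}")
```

Here the hypothetical “object_part” region matches the model’s focus far better than the whole object does, which is the kind of signal that would suggest a single feature is driving the prediction.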

The researchers were impressed by how well Shared Interest performed in these case studies, but Boggust cautions that the technique is only as good as the saliency methods it builds on. If those methods are biased or inaccurate, Shared Interest will inherit those limitations.

In the future, the researchers want to apply Shared Interest to different types of data, particularly tabular data, which is used in medical records. They also want to use Shared Interest to help improve current saliency techniques. Boggust hopes this research inspires more work that seeks to quantify machine-learning model behavior in ways that make sense to humans.

Reference: “Shared Interest: Measuring Human-AI Alignment to Identify Recurring Patterns in Model Behavior” by Angie Boggust, Benjamin Hoover, Arvind Satyanarayan and Hendrik Strobelt, 24 March 2022, arXiv. DOI: https://doi.org/10.48550/arXiv.2107.09234

This work is funded, in part, by the MIT-IBM Watson AI Lab, the United States Air Force Research Laboratory, and the United States Air Force Artificial Intelligence Accelerator.