ETH computer scientists have developed a new AI solution that enables touchscreens to sense with eight times higher resolution than current devices. As a result, touch devices can infer much more precisely where fingers touch the screen.

Quickly typing a message on a smartphone sometimes results in hitting the wrong letters on the small keyboard or on other input buttons in an app. The touch sensors that detect finger input on the touch screen have not changed much since they were first released in mobile phones in the mid-2000s.

In contrast, the screens of smartphones and tablets now provide unprecedented visual quality, which improves with each new generation of devices: higher color fidelity, higher resolution, crisper contrast. A latest-generation iPhone, for example, has a display resolution of 2532×1170 pixels. But the touch sensor it integrates can only detect input with a resolution of around 32×15 pixels, which is almost 80 times lower than the display resolution. “And here we are, wondering why we make so many typing errors on the small keyboard? We think that we should be able to select objects with pixel accuracy through touch, but that’s certainly not the case,” says Christian Holz, ETH computer science professor from the Sensing, Interaction & Perception Lab (SIPLAB), in an interview in the ETH Computer Science Department’s “Spotlights” series.
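The "almost 80 times" figure can be checked with a few lines of arithmetic. The sketch below simply divides the display resolution by the touch sensor resolution along each axis, using the numbers quoted above; the variable names are illustrative only.

```python
# Figures quoted in the article for a latest-generation iPhone.
display_px = (2532, 1170)   # display resolution in pixels
touch_cells = (32, 15)      # approximate touch sensor resolution

# Per-axis ratio between display resolution and touch resolution.
ratios = tuple(d / t for d, t in zip(display_px, touch_cells))
print(ratios)  # (79.125, 78.0)
```

Along both axes, one touch sensor cell covers a little under 80 display pixels.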

Together with his doctoral student Paul Streli, Holz has now developed an artificial intelligence (AI) called CapContact that gives touch screens super-resolution so that they can reliably detect when and where fingers actually touch the display surface, with much higher accuracy than current devices do. This week they presented their new AI solution at ACM CHI 2021, the premier conference on Human Factors in Computing Systems.

Recognising where fingers touch the screen

The ETH researchers developed the AI for capacitive touch screens, the type of touch screen used in all our mobile phones, tablets, and laptops. The sensor detects the position of the fingers because the electrical field between the sensor lines changes when a finger comes close to the screen surface. Because capacitive sensing inherently captures this proximity, it cannot actually detect true contact. This is sufficient for interaction, however, because the intensity of a measured touch decreases exponentially with the growing distance of the fingers.

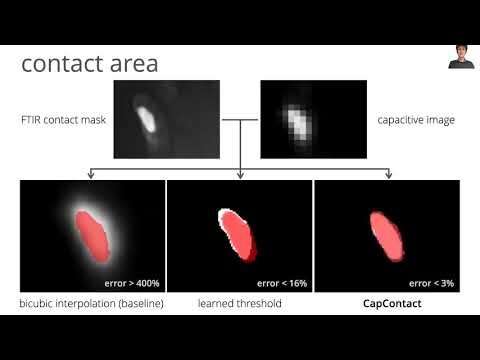

Capacitance sensing was never designed to infer with pinpoint accuracy where a touch actually occurs on the screen, says Holz: “It only detects the proximity of our fingers.” The touch screens of today’s devices thus interpolate the location where the input is made with the finger from coarse proximity measurements. In their project, the researchers aimed to address both shortcomings of these ubiquitous sensors: On the one hand, they had to increase the currently low resolution of the sensors and, on the other hand, they had to find out how to precisely infer the respective contact area between finger and display surface from the capacitive measurements.
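To make the idea of interpolating a touch location from coarse measurements concrete, here is a minimal sketch. The article does not say which interpolation method today's devices use; the intensity-weighted centroid below is an assumption chosen for illustration, and the function name and the sample frame are hypothetical.

```python
def weighted_centroid(grid):
    """Return the intensity-weighted (row, col) centroid of a 2D grid.

    `grid` is a list of rows of non-negative capacitive intensities.
    Returns None when no intensity was measured at all.
    """
    total = sum(sum(row) for row in grid)
    if total == 0:
        return None
    row_pos = sum(i * v for i, row in enumerate(grid) for v in row) / total
    col_pos = sum(j * v for row in grid for j, v in enumerate(row)) / total
    return row_pos, col_pos


# Hypothetical 4x4 sensor frame: the finger hovers mostly over column 2.
frame = [
    [0, 0, 0, 0],
    [0, 2, 6, 0],
    [0, 2, 6, 0],
    [0, 0, 0, 0],
]
location = weighted_centroid(frame)
print(location)  # (1.5, 1.75)
```

The result falls between sensor cells, which is how a coarse grid can still report sub-cell positions. It remains an estimate derived from proximity, not a measurement of the actual contact area.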

Consequently, CapContact, the novel method Streli and Holz developed for this purpose, combines two approaches: On the one hand, they use the touch screens as image sensors. According to Holz, a touch screen is essentially a very low-resolution depth camera that can see up to about eight millimeters away. A depth camera does not capture colored images, but records an image of how close objects are. On the other hand, CapContact exploits this insight to accurately detect the contact areas between fingers and surfaces through a novel deep learning algorithm that the researchers developed.

“First, CapContact estimates the actual contact areas between fingers and touchscreens upon touch,” says Holz. “Second, it generates these contact areas at eight times the resolution of current touch sensors, enabling our touch devices to detect touch much more precisely.”

To train the AI, the researchers constructed a custom apparatus that records the capacitive intensities, i.e., the types of measurements our phones and tablets record, and the true contact maps through an optical high-resolution pressure sensor. By recording a multitude of touches from several test participants, the researchers assembled a training dataset, from which CapContact learned to predict super-resolution contact areas from the coarse and low-resolution sensor data of today’s touch devices.

Low touch screen resolution as a source of error

“In our paper, we show that from the contact area between your finger and a smartphone’s screen as estimated by CapContact, we can derive touch-input locations with higher accuracy than current devices do,” adds Paul Streli. The researchers show that one-third of errors on current devices are due to the low-resolution input sensing. CapContact can remove these errors through the researchers’ novel deep learning approach.

The researchers also demonstrate that CapContact reliably distinguishes the touch surfaces even when fingers touch the screen very close together. This is the case, for example, with the pinch gesture, when you move your thumb and index finger across a screen to enlarge texts or images. Today’s devices can hardly distinguish close-by adjacent touches.
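A toy example illustrates why resolution matters for telling adjacent touches apart. This is not CapContact's method: the sketch below assumes a binary contact mask, counts connected regions with a simple flood fill, and compares a fine grid against a coarser version of the same grid. The masks and function names are hypothetical.

```python
def count_contacts(mask):
    """Count 4-connected regions of 1s in a binary 2D mask."""
    rows, cols = len(mask), len(mask[0])
    seen = set()
    count = 0
    for r in range(rows):
        for c in range(cols):
            if mask[r][c] and (r, c) not in seen:
                count += 1
                seen.add((r, c))
                stack = [(r, c)]
                while stack:  # flood fill over this region
                    y, x = stack.pop()
                    for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                        if (0 <= ny < rows and 0 <= nx < cols
                                and mask[ny][nx] and (ny, nx) not in seen):
                            seen.add((ny, nx))
                            stack.append((ny, nx))
    return count


def pool_any(mask, factor):
    """Downsample a mask: a coarse cell is 1 if any fine cell inside it is 1."""
    rows, cols = len(mask), len(mask[0])
    return [
        [
            int(any(mask[y][x]
                    for y in range(r, min(r + factor, rows))
                    for x in range(c, min(c + factor, cols))))
            for c in range(0, cols, factor)
        ]
        for r in range(0, rows, factor)
    ]


# Two fingers separated by a one-cell gap on the fine grid.
fine = [
    [1, 1, 1, 0, 1, 1, 1, 0],
    [1, 1, 1, 0, 1, 1, 1, 0],
]
coarse = pool_any(fine, 2)

print(count_contacts(fine))    # 2
print(count_contacts(coarse))  # 1
```

On the fine grid the gap between the two fingers survives, so two contacts are found. On the coarse grid the gap disappears and the touches merge into one.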

The findings of their project now call the current industry standard of touchscreens into question. In another experiment, the researchers used an even lower-resolution sensor than those installed in our mobile phones today. Nevertheless, CapContact detected the touches better and was able to derive the input locations with higher accuracy than current devices do at today’s regular resolution. This indicates that the researchers’ AI solution might pave the way for new touch sensing in future mobile phones and tablets that operates more reliably and precisely, yet with a reduced footprint and less complexity in sensor manufacturing.

To allow others to build on their results, the researchers are releasing their trained deep learning model, code, and their dataset on their project page.